An Introduction to Digital Cryptography

(c) Richard Drummond 2001-2003. This article may be freely distributed as long as this

copyright notice remains attached. First published in Digital, December, 2001. Prepared for the web May, 2003.

Anyone who transmits information via the Internet should know whether their data is secure. How certain can you be that no-one's listening in on your communications? This article introduces the basics of digital cryptography and looks at some of its applications on the Internet.

Cryptography, the ancient art of encoding messages to render them unreadable to anyone but the intended recipient, has been revolutionized by modern computer technology. Conversely, cryptography has had a profound effect on how we use and relate to today's information systems. Strong cryptography, once the preserve of governments and the military, is now in common use on the web and in personal and domestic information appliances. Whenever you use your credit card online, or watch a DVD or make a call on a cell phone - whether you realize it or not - you are making use of encryption technology. And, as computing devices become a more pervasive and transparent part of everyday life, it is important to understand the limitations and weaknesses of cryptography and any implications this technology has on civil liberties, if we are to rely on such techniques to secure our privacy and digital identity.

The ABCs of Cryptography

Cryptography has been practised at least since classical times. Julius Caesar, for example, is credited with invention of a simple cipher system which he used to stop military intelligence falling into his enemies' hands. By today's standards, this so-called Caesar Cipher is hopelessly primitive, but since - in its application at least - it is similar to modern techniques, it serves as a useful example for explaining the principles of cryptography.

Suppose Bob wants to send Alice a message via some insecure medium, and, to ensure that such messages are unreadable by anybody else, Bob and Alice have agreed previously that any messages they exchange will be encoded using the Caesar Cipher. Note that the medium itself doesn't matter at all: it could be a hand-penned message written on parchment and carried on horseback or it could be ASCII text sent as an e-mail.

The message that Bob wishes to send is called plaintext, the process of encoding it known as encryption and the resulting encoded message called ciphertext. To create ciphertext from plaintext using the Caesar Cipher is a simple task for Bob to perform. He merely replaces each letter in the message with the letter in the alphabet three places to its right - wrapping around back to 'A' after the letter 'Z'. So, a letter 'A' in the plaintext becomes a letter 'D' in the ciphertext, a 'B' becomes an 'E', and so on, all the way up to 'Z' which is replaced by a 'C'. Having encrypted his message, Bob then sends the ciphertext on its way to Alice, who, on receiving it, applies the reverse process - known as decryption - and thus obtains the original plaintext message. To decrypt the encoded message, she replaces each letter in the ciphertext with the letter in the alphabet three places to its left. Hey presto, the original message is recreated!

Now, the Caesar Cipher is trivial to break, and, once broken, all future communication between Alice and Bob would be insecure. It doesn't take a huge leap of the imagination, however, for Alice and Bob to make things slightly more difficult for their enemies. With each message they wish to encrypt, they could vary the amount which they shift the letters by - rather than always shifting by a fixed amount of three. Here they are generalizing from the Caesar Cipher to a whole class of ciphers, a class defined by the cryptographic algorithm 'replace each letter in the plaintext with the letter n places to its right in the alphabet'. The number n in each application of this algorithm is known as the cryptographic key. To be able to decrypt the ciphertext now you need to know both the algorithm and the key (the value of n which was used to encrypt the message).

This 'shift right' cipher system is a simple example of what is called a block cipher. This is because the cipher system divides the plaintext message up into fixed size blocks and operates on each of these blocks in turn. In our case, the block size is determined by how we choose to represent each letter in the plaintext. If we were to use the familiar 8-bit ASCII encoding for text, for example, the block size would be 8 bits. Modern block ciphers use larger block sizes than this and, depending on the algorithm, will work with a fixed block size or one of series of supported block sizes; moreover, the operation performed on each block will be much more complex than our simple 'shift right' operation and will typically involve several 'rounds' or applications of the encrypting operation and may involve feedback from a previously encrypted block. The basic principle is the same, though.

Pretty Good Privacy

The first generally available application of strong cryptography for the safeguarding of personal privacy was Phil Zimmerman's PGP or Pretty Good Privacy (see http://www.pgp.com). PGP uses a combination of public and secret key algorithms for the encryption of general data and files such as e-mail, and for the creation and authentication of digital signatures.

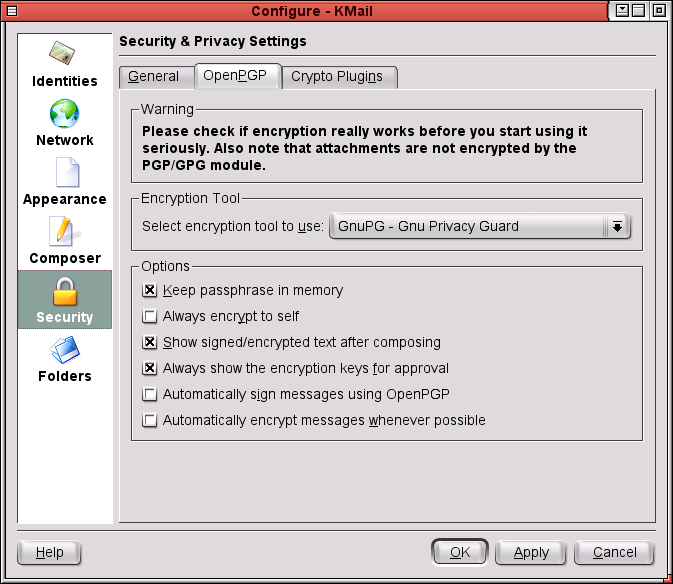

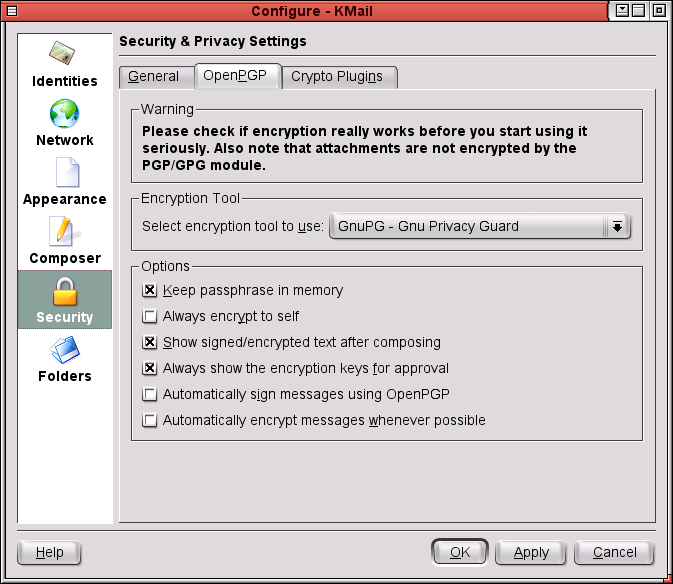

Despite being restricted from export from the US due to the draconian laws on cryptography in force at the time, PGP quickly became the de-facto standard for the encryption of e-mail. In fact, most modern e-mail clients directly support the use of PGP for the encryption and signing of e-mail.

PGP has always been free for personal use, but remains proprietary software, marketed by the company that Zimmerman set up. To create an open standard based on PGP, the IETF (the Internet Engineering Task Force) - the body which creates Internet standards - set up the OpenPGP working group. A popular PGP clone, based on the IETF's OpenPGP standard, is developed by the GNU project and is called GnuPG (see http://www.gnupg.org).

Public key cryptography

No matter how secure your encryption algorithm, conventional cryptographic methods all suffer one major drawback. Since the same cryptographic key is used for encryption and decryption, for Alice to be able to read a message that Bob sends, Bob must somehow give her the key that was used to encode the message. If this is performed over an insecure medium as well, then the key can easily be intercepted and the security of the system compromised without their knowledge.

This problem of key management was solved in 1976 with the

invention of public key cryptography by Whitfield Diffie and Martin Hellman

at Stanford University. Most modern e-mail clients support PGP or GnuPG for encryption and signing. In public key cryptography, two mathematically related keys are generated for each party wishing to communicate - a public key and a private key. A message encoded using a particular public key can only be decrypted using the corresponding private key.

Most modern e-mail clients support PGP or GnuPG for encryption and signing. In public key cryptography, two mathematically related keys are generated for each party wishing to communicate - a public key and a private key. A message encoded using a particular public key can only be decrypted using the corresponding private key.

For Bob to send a message to Alice using public key cryptography, Bob encrypts the message using Alice's public key. Bob can now transmit the ciphertext to Alice safe in the knowledge that only Alice's private key can be used to decrypt and read it. Since her private key is used for decryption, Alice needs to keep this safe; but because she doesn't need to give this private key to anybody for them to be able to communicate securely with here, this is relatively easy to do. She need to take no such precautions with here public key. In fact, anybody can have access to it. She can post it to here friends in e-mails or even publish it on the web.

Public key algorithms rely for their security on the fact that certain types of mathematical function are easy to perform in one direction but are difficult to do in reverse. For example, the popular RSA algorithm (named after its inventors Ronald Rivest, Adi Shamir and Leonard Adlemann) makes use of the factorization problem: while it's easy to multiply two large prime numbers together, it is vastly more difficult to factor that product back into two large primes. It is exactly this problem that would need to be solved for the code-breaker to be able to deduce an RSA private key form the corresponding public key. Other public key algorithms take advantage of other computationally hard problems, such as the discrete logarithm problem or elliptic curves.

Secure Socket Layer

SSL, or Secure Socket Layer, is the most popular standard for encrypting Internet traffic. It can in principle be used to protect any Internet application, but it was invented by Netscape to provide secure access to web pages. It has since become an open standard maintained by the OpenSSL group (see http://www.openssl.org).

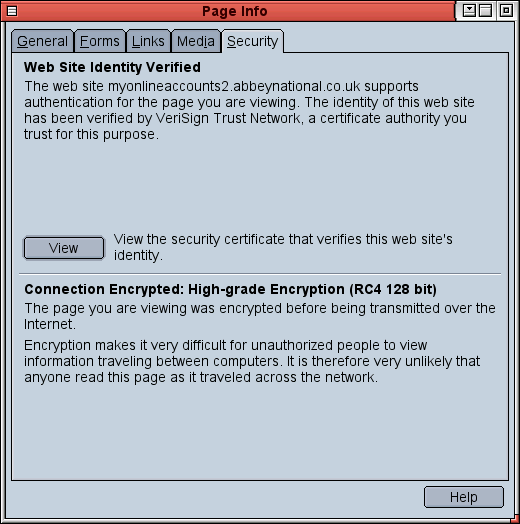

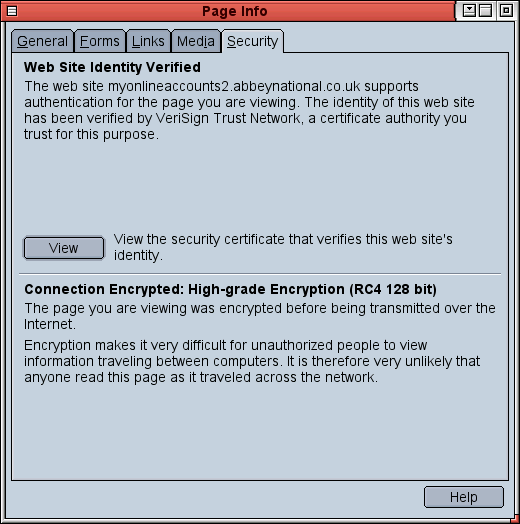

It is SSL that has allowed e-commerce to flourish on the web. Whenever you use the HTTPS protocol to log into a web server and your browser shows you the locked padlock symbol, you can be reasonably sure that whatever transaction you perform will be secure.

SSL provides security in two ways. It authenticates the web server using digital signatures (a server must have a certificate signed by a trusted body, typically a commercial Certificate Authority such as Thawte or Verisign) so that you know the server is run by the company it claims to be run by; and it encrypts the traffic between your browser and the server using an agreed-upon block cipher, thus ensuring that nobody else can eavesdrop on the communication.

Until recently, 40-bit or 56-bit block ciphers were the norm in SSL encryption, but these are potentially vulnerable to brute force attack, so the recent crop of "International" browsers support 128-bit cryptography and should be used where possible to guarantee security.

Cryptography and the Internet

The Internet and its most popular application, the World Wide Web, are completely public systems. Unless you take precautions, any information you send over the 'Net is potentially visible to all. Sending an e-mail, for example, is like posting a letter without an envelope: anybody can intercept the message and read its contents as it is routed towards its recipient. Similarly, when your web browser logs into a web server, anyone can listen in to the information that is exchanged between your computer and the remote server.

What, then, is the solution to security and privacy on the

Internet? The answer is public key cryptography. Web sites that employ the HTTPS protocol use SSL for authentication and encryption.It is public key cryptography that makes e-commerce on the web possible. It is used, for example, in the Secure Socket Layer (SSL) protocol which enables secure access to we pages. It is public key cryptography, as used in software like PGP (Pretty Good Privacy), that can safeguard the privacy of your e-mails and data. And it is public key cryptography which permits the creation of digital signatures, so that the authenticity and integrity of digital messages can be verified.

Web sites that employ the HTTPS protocol use SSL for authentication and encryption.It is public key cryptography that makes e-commerce on the web possible. It is used, for example, in the Secure Socket Layer (SSL) protocol which enables secure access to we pages. It is public key cryptography, as used in software like PGP (Pretty Good Privacy), that can safeguard the privacy of your e-mails and data. And it is public key cryptography which permits the creation of digital signatures, so that the authenticity and integrity of digital messages can be verified.

Most applications of cryptography on the Internet, such as SSL, work as an additional layer over existing Internet protocols. A more recent development, IPSec (Internet Protocol Security), is a secure replacement for the Internet Protocol (IP) using public key cryptography (IP is one of the fundamental Internet protocols and is responsible for the packaging and routing of information transmitted via the Internet). An increasingly popular application for IPSec today is Virtual Private Networking, the creation of a secure, private network on top of an untrusted, public network like the Internet. All data sent over a VPN is encrypted by IPSec, so the application software used to communicate over a VPN doesn't have to use or support cryptography for the data to remain secure.

Digital signatures

As well as for encrypting data, public key cryptography can be used to create digital signatures, a means of verifying the authenticity and integrity of a plaintext message or file. This signature can then be checked to ensure that the message or file was in fact created by the attributed author and that nobody has tampered with its contents since it was created. Digital signatures are widely used, for example, in the signing of software packaged for distribution on the Internet. They provide a level of confidence that the software you download hasn't been infected with a virus.

The first step in creating a digital signature is to use a cryptographic hash function - such as the popular MD5 algorithm - to generate a message digest or "fingerprint" of the message or file to be signed. This message digest is a sequence of bits (128 bits in the case of the MD5 algorithm) which uniquely or very nearly uniquely represents the message. A good hash function is designed to make it very difficult to generate another message which would hash to the same sequence of bits. Thus any modification of the message should result in a different message digest.

Next, the message digest is encrypted with the author's private key to create the message signature. Both message and signature are then distributed.

Suppose Alice wishes to verify that a digitally signed message she has received was indeed from Bob. She uses Bob's public key to decrypt the message's digital signature and so reveal the message digest. She then computes a fresh message digest for the message that she received and compares it to the one that was encoded in the signature. If they don't match, either the message wasn't actually from Bob or the message has been tampered with in some way.

The DES Algorithm

DES, or the Data Encryption Standard, was developed by IBM in the mid 1970s for use by the US government. DES uses a key size of 56 bits which, as explained in the main article, is prone to brute force attack; therefore, DES is no longer considered secure.

DES has proven remarkably resistant to cryptanalysis, however, and can be made secure by increasing the key size. TripleDES (sometimes called 3DES) makes three applications of the DES algorithm using two keys, thus upping the key size to a safer 112 bits. DES has recently been officially replaced by AES, the Advanced Encryption Standard.

How secure is cryptography?

A cryptographic system is only useful if it cannot be easily broken, but how can we gauge the resistance of a particular method to attack?

One measure of an algorithm's strength is the size of its keyspace, the set of all possible keys that can be used with that algorithm. For example, in our simple 'shift right' algorithm discussed earlier, the keyspace is the set of numbers from 1 to 25 (although we can shift by 26 letters, the resulting ciphertext would be identical to the plaintext, so we won't count that). If an algorithm's keyspace is too small, then it is possible to break the encryption by brute force: we simply try each possible key in turn and see if the ciphertext decrypts to a meaning plaintext message. With our simple example, this type of attack is trivial to perform, even without computer power, and the cipher is hopelessly insecure.

More sophisticated algorithms can also be open to brute force attack, however, especially with the readily available power of today's microprocessors. Algorithms that may have once been considered secure are safe no longer. For example, the DES algorithm - which has a keyspace of 256 (that is 2 multiplied by itself 56 times) which is roughly 7x1016 keys (or 7 followed by sixteen zeroes) - can be broken by brute force in a matter of hours by specialized devices. It was also broken by the distributed.net project - which utilizes the otherwise unused CPU cycles of hundreds of average PCs via the Internet - in under 24 hours in January 1999. The inexorable increase in processor power of the desktop PC makes this type of exhaustive search more and more accessible.

The term "strong cryptography" is applied to algorithms that, even when the algorithm is known, are not prone to brute force attack. Currently, for block ciphers a key size of 80 bits (a keyspace of 280) and above is considered secure for everyday purposes, while for public key systems such as RSA a safe key size is at least 1024 bits. These key sizes are considered safe simply because there is currently insufficient computing power in the world to exhaustively search such large keyspaces in a practical time.

To crack strong cryptography, more subtlety is required: one must look for ways to reduce the number of keys that it is necessary search. This typically requires complex mathematical analysis, or cryptanalysis, of the particular algorithm to find its weakness, and several algorithms have been shown to be insecure in this way.

Copy protection

Cryptography is not only used to safeguard our rights; it can be used to deny them, too. An example is the Digital Scrambling System (DSS) used to encode video DVDs. DSS uses a public-key cryptography system to ensure that video DVDs can only be played via officially licensed hardware or software. This means, for example, that it is impossible to legally create an open source program to view DVD movies on your computer. Many see this as contrary to fair use. Having bought a DVD, why can we not watch it on any device we wish?

The usual justification for DSS is that it is there to deter illegal copying of DVDs. In actual fact, it does no such thing. It is perfectly possible to copy an encrypted DVD - in the same way that it is possible to photocopy a page of ciphertext. The pirated copy will still only be able to be played on a device equipped with an appropriate decryption system.

DSS was cracked in 1999, and now unlicensed software which will play encrypted DVDs is generally available on the web for free. Using such software is illegal in many countries, however, including the USA, where it contravenes the Digital Millennium Copyright Act.

Implications

Governments are naturally wary of strong cryptography, because it weakens their powers to eavesdrop on their enemies and on their citizens. The usual argument is that governments and law enforcement bodies need to keep tabs on criminals, terrorists and foreign powers - something that they cannot easily do when strong cryptography is freely available.

Traditional measures have been to limit the exportation of strong cryptographic technologies, but with the advent of e-commerce this is no longer a practical or effective remedy. Alternative proposals - such as a key escrow scheme (where to be able to legally use public key cryptography, you must register and hand over a copy of your private key to the government) or "backdoors" to encryption, such as the Clipper chip proposed by the Clinton administration in the US - have been shelved thanks to the lobbying of civil liberties groups such as the Electronic Frontier Foundation (http://www.eff.org). After all, why should we trust the government not to misuse such powers? What's more, any such central repository of keys - as proposed by the escrow scheme - would be a prime target for criminal attack. However, with the recent outbreaks of terrorist activity, many fear that both the US and the UK governments will once more try to push through legislation to limit the use of strong cryptography.

The fact is that everybody has a legitimate use of strong cryptography, even it is only to secure their purchases over the Internet. The next time you log in to Amazon.com to buy the latest bestseller, have a think about how cryptography is being used to protect your privacy and to protect you against fraud. Do you really want to surrender that protection to the government?

Most modern e-mail clients support PGP or GnuPG for encryption and signing. In public key cryptography, two mathematically related keys are generated for each party wishing to communicate - a public key and a private key. A message encoded using a particular public key can only be decrypted using the corresponding private key.

Most modern e-mail clients support PGP or GnuPG for encryption and signing. In public key cryptography, two mathematically related keys are generated for each party wishing to communicate - a public key and a private key. A message encoded using a particular public key can only be decrypted using the corresponding private key.